AI Governance in India: Law, Liability and the Battle for Algorithmic Accountability

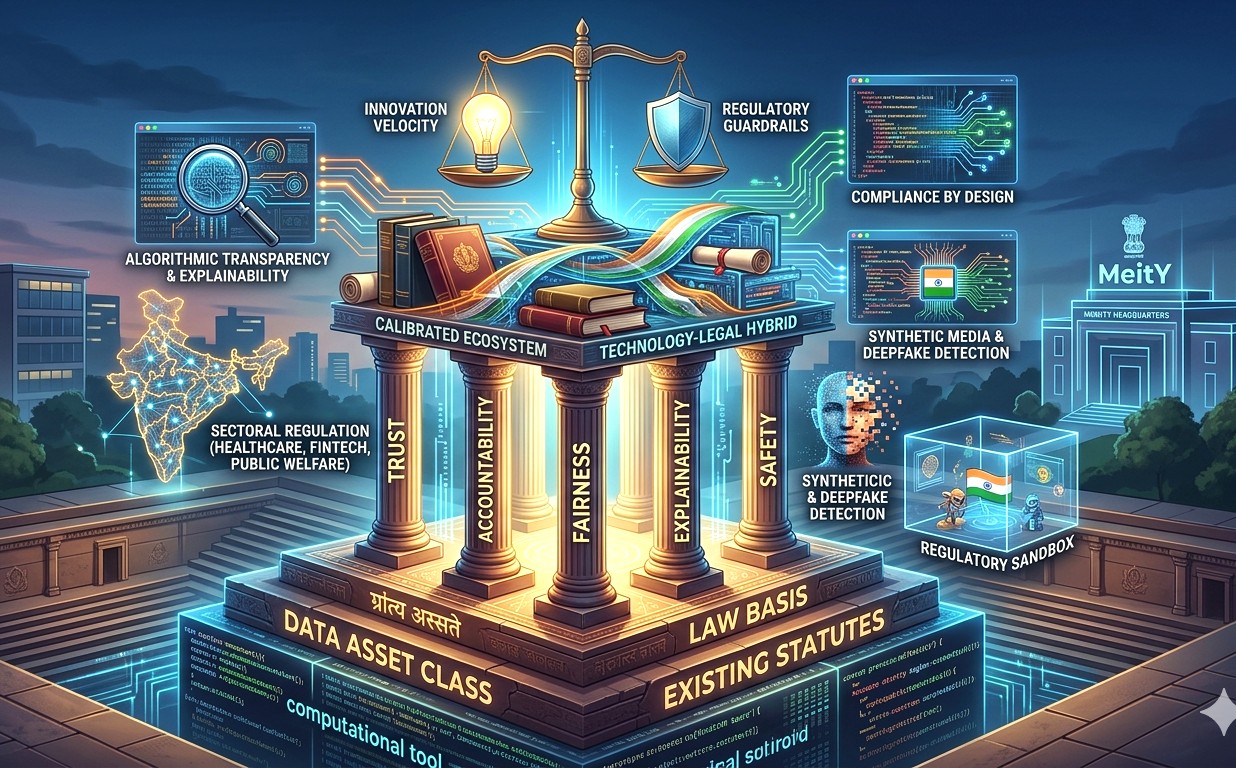

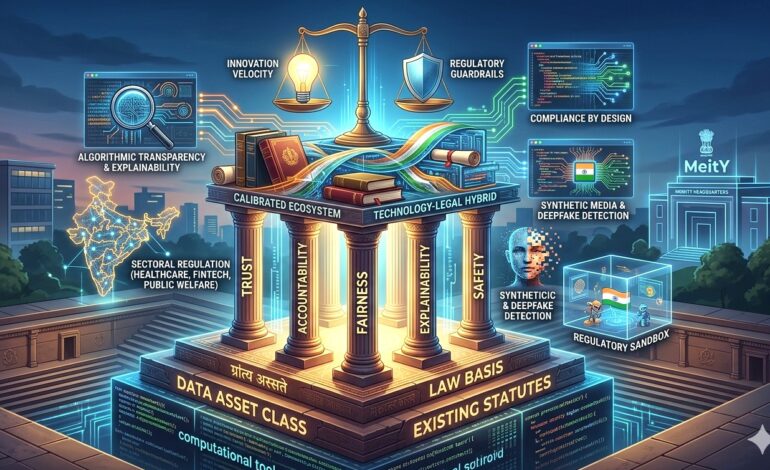

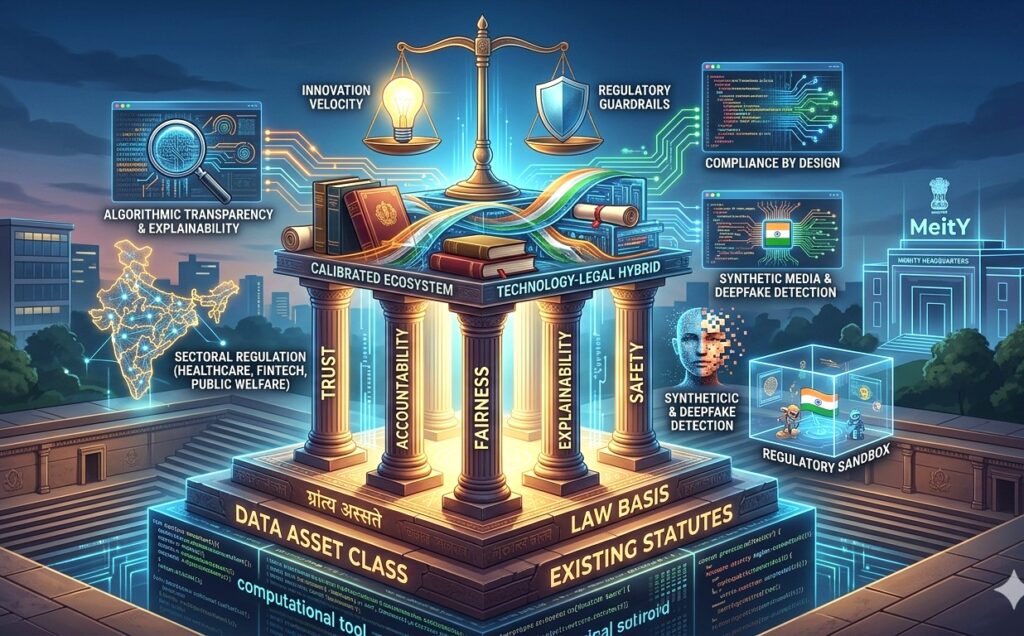

AI has transitioned from a computational tool to a foundational layer of decision-making infrastructure across sectors and digital platforms. This has forced legal thinking to move away from human-centric accountability models and toward a hybrid techno-legal framework that accommodates algorithmic autonomy. As of 2026, Indian regulation is neither codified in a single omnibus statute nor entirely laissez-faire. It is a carefully calibrated ecosystem that blends statutory law, executive guidelines, and sectoral regulation to govern AI systems while preserving innovation velocity.

At the core of India’s AI governance architecture is a principle-based model set out in Guidelines issued by the Ministry of Electronics and Information Technology (MeitY). These Guidelines establish a normative framework rooted in trust, accountability, fairness, explainability, and safety, and they explicitly endorse an “innovation over restraint” philosophy. This approach places India between the prescriptive, risk-classification-heavy EU model and the market-driven, sector-led approach of the United States. India’s framework does not impose ex ante compliance burdens. Instead, it relies on a combination of ex post liability, due diligence obligations, and embedded design governance, which aligns legal compliance with system architecture itself (compliance by design).

The statutory backbone of AI governance in India is formed by the interplay of existing laws and sector-specific regulations. This ecosystem directly affects AI systems that rely on large-scale data ingestion for training and inference. It turns data from a mere technical input into a legally regulated asset class that requires traceability, a lawful basis for processing, and accountability across the AI lifecycle. Consequently, organisations deploying AI must embed data governance protocols into model design, including anonymisation standards, consent architectures, and retention controls. Legal compliance thereby becomes part of the technical stack itself.
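To make "consent architectures and retention controls" concrete, here is a minimal sketch of a pre-training filter. It is illustrative only and assumes a hypothetical record schema (`Record`, `consented_purposes`, `collected_at`), a hypothetical purpose label (`"model_training"`) and an assumed one-year retention window. None of these names or values comes from any Indian statute. The salted hash shown is pseudonymisation, which is weaker than true anonymisation.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


# Hypothetical record schema; field names are illustrative, not drawn from any statute.
@dataclass(frozen=True)
class Record:
    user_id: str
    text: str
    consented_purposes: frozenset[str]
    collected_at: datetime


# Assumed retention window; the real period depends on the applicable law and purpose.
RETENTION = timedelta(days=365)


def pseudonymise(user_id: str, salt: bytes) -> str:
    """Replace a direct identifier with a salted SHA-256 digest.

    Note: this is pseudonymisation, not full anonymisation.
    """
    return hashlib.sha256(salt + user_id.encode("utf-8")).hexdigest()


def training_eligible(records, purpose, now, salt):
    """Keep records consented for `purpose` and inside the retention window,
    pseudonymising identifiers before they reach the training pipeline."""
    out = []
    for r in records:
        if purpose not in r.consented_purposes:
            continue  # no consent recorded for this purpose
        if now - r.collected_at > RETENTION:
            continue  # past retention: should be deleted, not used
        out.append((pseudonymise(r.user_id, salt), r.text))
    return out


# Usage with made-up sample data.
now = datetime(2026, 1, 1, tzinfo=timezone.utc)
records = [
    Record("u1", "hello", frozenset({"model_training"}), now - timedelta(days=10)),
    Record("u2", "world", frozenset({"analytics"}), now - timedelta(days=10)),
    Record("u3", "stale", frozenset({"model_training"}), now - timedelta(days=400)),
]
eligible = training_eligible(records, "model_training", now, b"demo-salt")
print(len(eligible))  # 1: u2 lacks training consent, u3 is past retention
```

The design choice here is to apply the check at the boundary where data enters the training pipeline. The lawful-basis decision is therefore made once and recorded in code, rather than left to downstream model code.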

Liability, however, remains the most complex and fastest-evolving dimension of AI governance. Traditional legal systems assume identifiable actors, such as manufacturers, service providers, or intermediaries. AI systems, by contrast, distribute agency across developers, deployers, and users. The law is gradually responding by moving toward a shared liability model, in which responsibility is apportioned across the AI value chain. Existing intermediary law imposes obligations on platforms to monitor, remove, and mitigate unlawful content, including AI-generated outputs such as deepfakes. Further, synthetically generated content falls squarely within the regulatory ambit, which removes any ambiguity about jurisdiction over AI-generated harms.

This evolution signals a doctrinal shift from fault-based liability toward risk-based and duty-of-care frameworks. Under such an approach, liability may arise not only from direct causation but also from a failure to implement adequate guardrails, testing protocols, or transparency measures. For example, an AI-driven recommendation engine that produces biased or discriminatory outcomes could trigger liability under unfair trade practice provisions. Where a state-deployed AI system infringes fundamental rights, liability could also arise under constitutional principles. At the same time, the absence of explicit statutory provisions for autonomous decision-making systems creates interpretive challenges, particularly in attributing intent, negligence, or foreseeability to algorithmic conduct.
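Under a duty-of-care framing, a key question is whether the deployer tested for biased outcomes before and during deployment. The sketch below shows one widely used, but not legally mandated, screening check: the disparate impact ratio, which is the lowest group selection rate divided by the highest. The 0.8 threshold is an assumption borrowed from the US "four-fifths" heuristic. It is not a standard set by Indian law, and the group labels and sample data are invented for illustration.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected: bool). Returns selection rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, chosen in outcomes:
        totals[group] += 1
        selected[group] += int(chosen)
    return {g: selected[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Assumed threshold from the US "four-fifths" heuristic; not an Indian legal standard.
THRESHOLD = 0.8


def audit(outcomes, threshold=THRESHOLD):
    """Return the ratio and whether it falls below the screening threshold."""
    ratio = disparate_impact_ratio(outcomes)
    return {"ratio": ratio, "flagged": ratio < threshold}


# Made-up sample: group A selected 6/10 times, group B 3/10 times.
sample = [("A", True)] * 6 + [("A", False)] * 4 + [("B", True)] * 3 + [("B", False)] * 7
report = audit(sample)
print(report)  # {'ratio': 0.5, 'flagged': True}
```

A flag from a check like this does not establish discrimination in law. What it does produce is a documented testing record, and that record is exactly what a duty-of-care inquiry would look for.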

AI systems trained on vast datasets often raise questions about lawful use, fair dealing, and the ownership of AI-generated outputs. The current legal position is not fully equipped to address the authorship of machine-generated works or the legality of large-scale data mining for model training. Policymakers have acknowledged the need for reform, particularly to balance innovation incentives with the protection of creators’ rights, potentially through text and data mining exceptions or licensing frameworks. This tension between proprietary rights and open innovation is likely to define the next phase of AI regulation in India.

A critical emerging dimension of AI governance is algorithmic transparency and explainability. The Indian framework increasingly emphasises systems that are understandable by design, requiring that AI outputs be interpretable, auditable, and contestable. This matters most in high-impact domains such as credit scoring, healthcare diagnostics, and public welfare distribution, where opaque decision-making can lead to systemic bias or exclusion. However, this requirement must be balanced against the protection of trade secrets, proprietary algorithmic models, and commercial confidentiality.

Equally significant is the regulation of synthetic media and deepfakes, which exemplify the societal risks posed by advanced AI systems. India’s regulatory response has moved toward mandatory labelling of AI-generated content and enhanced due diligence obligations for intermediaries, aimed at preserving informational integrity and public trust. These measures indicate a shift toward content provenance frameworks, including watermarking and authentication mechanisms. Their technical limitations and susceptibility to circumvention, however, remain acknowledged challenges.
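The sketch below illustrates the authentication side of content provenance and one of its limits. It attaches a signed label declaring content as AI-generated, then checks that both the label and the content are unchanged. Everything here is a hypothetical example: the key, the field names and the use of a shared-secret HMAC are all assumptions. It is not any prescribed Indian format or an industry standard such as C2PA, and it is not a watermark embedded in the content itself.

```python
import hashlib
import hmac
import json

# Hypothetical signing key held by the generating platform. Real deployments would
# use managed keys and typically public-key signatures rather than a shared secret.
SIGNING_KEY = b"demo-platform-key"


def label_content(content: bytes, generator: str) -> dict:
    """Build a signed provenance label declaring the content as AI-generated."""
    manifest = {
        "ai_generated": True,
        "generator": generator,
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    manifest["signature"] = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return manifest


def verify_label(content: bytes, manifest: dict) -> bool:
    """Check that the label is unaltered and still matches the content."""
    claimed = {k: v for k, v in manifest.items() if k != "signature"}
    payload = json.dumps(claimed, sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest.get("signature", "")):
        return False  # the label itself was altered
    return claimed["sha256"] == hashlib.sha256(content).hexdigest()


image = b"synthetic image bytes"
label = label_content(image, "example-model")
print(verify_label(image, label))         # True
print(verify_label(image + b"x", label))  # False: content edited after labelling
```

Note the circumvention problem the paragraph mentions. A label that travels as separate metadata can simply be stripped, and verification then has nothing to check. Embedded watermarking tries to address this, but it is a different technique and is not shown here.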

From a governance perspective, India’s approach is best characterised as a “techno-legal hybrid”. Regulation is not confined to statutory enactments but extends into institutional mechanisms such as regulatory sandboxes, AI safety institutes, and multi-stakeholder advisory bodies. This model recognises that AI evolves at a pace that outstrips traditional legislative cycles, which calls for adaptive governance structures capable of iterative policy development.

Notably, India has consciously deferred the enactment of a standalone AI law, opting instead to leverage and adapt existing legal frameworks while allowing regulatory principles to mature organically.

Looking forward, the future of algorithmic governance in India will be shaped by three converging forces: regulatory consolidation, technological standardisation, and global alignment. The anticipated trajectory has three parts. The first is formal codification of AI governance principles into binding legislation. The second is harmonisation with global standards such as the OECD AI Principles and ISO frameworks. The third is greater emphasis on accountability mechanisms such as algorithmic audits, impact assessments, and certification regimes. At the same time, the rise of autonomous and generative AI systems will require a rethinking of foundational legal concepts such as agency, liability, and authorship, potentially leading to novel doctrines tailored to machine intelligence.

AI governance in India is at a formative yet decisive stage, characterised by a deliberate balancing of innovation and regulation. The existing framework reflects a pragmatic recognition that AI cannot be regulated through rigid, siloed statutes. It requires an integrated legal architecture that evolves alongside technological advancement.

The challenge for policymakers, practitioners, and businesses alike is to operationalise this framework into enforceable, auditable, and ethically grounded practices that ensure AI systems remain not only powerful but also lawful, accountable, and aligned with constitutional values.